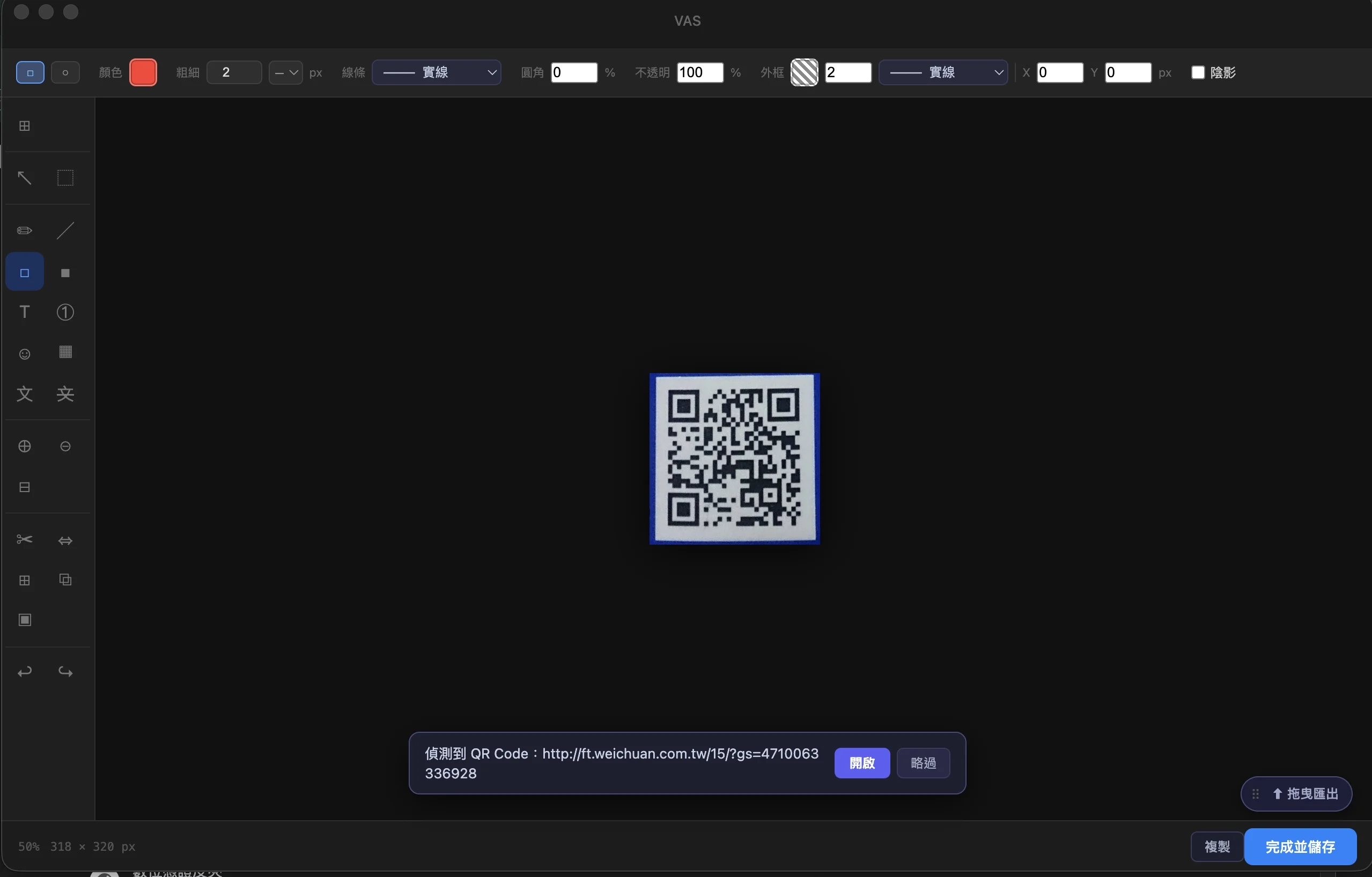

QR Code detected — but how confident is it?

Scanning a QR Code seems like a binary outcome: found or not found. VAS treats it differently: it reads the user's intent, then decides what to do next based on that intent.

This is also human-AI collaboration: the user speaks through how they frame the screenshot, and the tool understands. No language, no buttons, no menus — how you frame it is what you want. The tool isn't reading commands; it's reading behaviour.

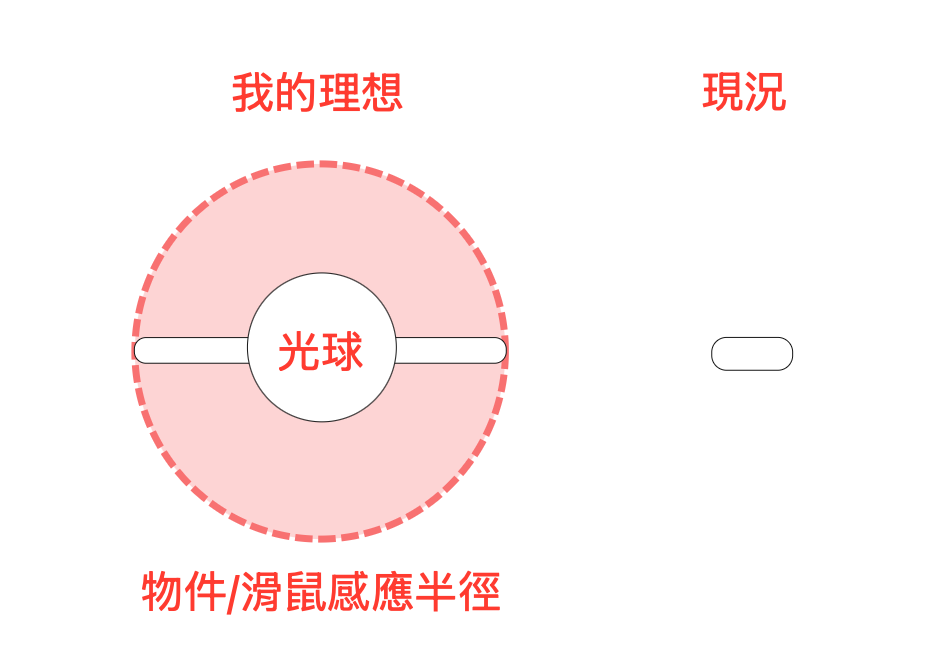

We reasoned that when a user wants accurate QR Code detection, they will naturally frame the code as completely and exclusively as possible within the selection box, and that act is itself a declaration of intent. The more of the selected area the QR Code fills, the more accurate the recognition, the higher the confidence, and the more directly the tool can act. A wordless understanding forms between user and tool.
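One way to picture this relationship is as a fill ratio: the share of the selection box that the detected QR Code covers. The sketch below is only an illustration under stated assumptions. The `Rect` type and the `fill_ratio` function are hypothetical names, and nothing here is taken from how VAS actually computes confidence.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    """Axis-aligned rectangle in screen pixels (hypothetical helper type)."""
    x: float
    y: float
    width: float
    height: float

    @property
    def area(self) -> float:
        # Negative sizes are treated as empty.
        return max(self.width, 0.0) * max(self.height, 0.0)


def intersection(a: Rect, b: Rect) -> Rect:
    """Return the overlapping region of two rectangles (empty if disjoint)."""
    left = max(a.x, b.x)
    top = max(a.y, b.y)
    right = min(a.x + a.width, b.x + b.width)
    bottom = min(a.y + a.height, b.y + b.height)
    return Rect(left, top, max(right - left, 0.0), max(bottom - top, 0.0))


def fill_ratio(selection: Rect, qr_bounds: Rect) -> float:
    """Fraction of the selection covered by the QR Code, from 0.0 to 1.0.

    Assumption: this ratio stands in for the confidence signal described
    in the text; the real signal may combine other factors.
    """
    if selection.area == 0:
        return 0.0
    return intersection(selection, qr_bounds).area / selection.area
```

For example, an 80 by 80 pixel code centred in a 100 by 100 pixel selection gives `fill_ratio(Rect(0, 0, 100, 100), Rect(10, 10, 80, 80))`, about 0.64, while the same code inside a full-screen selection would score far lower.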

A tool shouldn't pretend to be certain when it isn't. When confidence is high and intent is clear, it opens the link directly; when confidence is middling, it asks whether you'd like to open it; when confidence is too low, it silently opens the editor and hands control over to you. Behind each threshold is an honest design principle: knowing how much it knows.
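The three-way behaviour can be sketched as a simple threshold check. This is a minimal illustration, not the actual VAS logic: the names `Action` and `choose_action` are hypothetical, and the cut-off values 0.8 and 0.4 are placeholders, since the real thresholds are not stated here.

```python
from enum import Enum


class Action(Enum):
    OPEN_LINK = "open_link"      # act immediately, no prompt
    ASK_USER = "ask_user"        # confirm before opening
    OPEN_EDITOR = "open_editor"  # hand control back to the user


# Assumed placeholder thresholds; the real values are not given in the text.
HIGH_CONFIDENCE = 0.8
LOW_CONFIDENCE = 0.4


def choose_action(confidence: float) -> Action:
    """Map a confidence score in [0.0, 1.0] to one of three behaviours."""
    if confidence >= HIGH_CONFIDENCE:
        return Action.OPEN_LINK
    if confidence >= LOW_CONFIDENCE:
        return Action.ASK_USER
    return Action.OPEN_EDITOR
```

With these placeholder values, `choose_action(0.9)` opens the link, `choose_action(0.5)` asks first, and `choose_action(0.1)` falls back to the editor. Keeping the thresholds as named constants makes each boundary easy to tune independently.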